The POC works. The model responds well in the demo. The team is enthusiastic. Now what?

Putting an LLM into production in a real business context is an operation of categorically different complexity from deploying a classic API. Teams that discovered this after the fact paid a high price: off-topic responses in production, exploding inference costs, inability to audit a problematic behaviour, undetected regression after a prompt change.

LLMOps is the set of practices that prevent these pitfalls.

What makes LLMs different from other software components

A classic software component is deterministic: the same inputs produce the same outputs. An LLM is probabilistic: the same inputs can produce different outputs, depending on the temperature, the sampling strategy and the model version actually served.

This property fundamentally changes how you test, monitor and maintain the system.

No classic unit tests. You cannot write assertEqual(llm.generate(prompt), expected_output). You write probabilistic evaluations: over 100 calls with this prompt, at least 90% of responses must satisfy these criteria.
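A minimal sketch of such a probabilistic evaluation, in Python. The names `pass_rate` and `fake_generate` are hypothetical, and `fake_generate` is a deterministic stand-in for a real model client so that the example runs without network access.

```python
import itertools
from typing import Callable


def pass_rate(generate: Callable[[str], str],
              prompt: str,
              check: Callable[[str], bool],
              n_calls: int = 100) -> float:
    """Call the model n_calls times and return the fraction of responses passing `check`."""
    passed = sum(1 for _ in range(n_calls) if check(generate(prompt)))
    return passed / n_calls


# Hypothetical stand-in for a model client: every 20th call returns an off-target answer.
_calls = itertools.count(1)


def fake_generate(prompt: str) -> str:
    return "I am not sure." if next(_calls) % 20 == 0 else "Paris"


rate = pass_rate(fake_generate, "What is the capital of France?", lambda r: "Paris" in r)
print(f"pass rate: {rate:.2f}")

# The test asserts on a rate over many calls, not on one exact output.
assert rate >= 0.90, f"pass rate {rate:.2f} is below the 90% threshold"
```

The threshold (90%) and the number of calls (100) are the figures from the paragraph above. In practice both are tuned per use case, and the check function is usually richer than a substring match.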

No classic versioning. When the provider updates their base model (GPT-4 → GPT-4o, Claude 2 → Claude 3), your system changes, even if nothing was touched on the application side. And you may not know about it.

No classic debugging. Understanding why the model produced a particular response is not as simple as reading a stack trace.

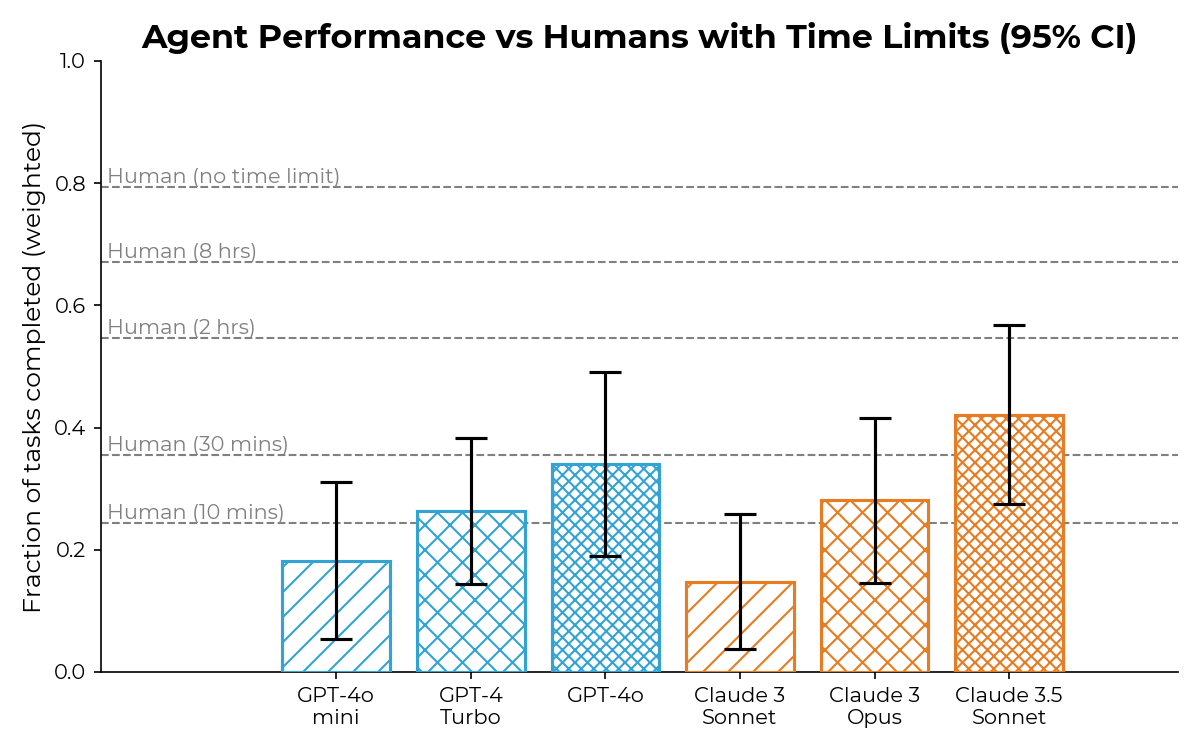

Source: METR, Evaluating Frontier AI (August 2024). AI agent performance on tasks requiring 30 min to 8h for a skilled human. The progression from 2019 to 2024 illustrates why LLMOps practices are becoming critical: models evolve fast, and the systems that embed them must keep up.

The disciplines to instrument continuously

Managing prompts as code

Prompt engineering is development. Prompts must be versioned, reviewed, tested and deployed with the same rigour as application code.

Concretely:

- Prompts are stored in the code repository (or a dedicated system like LangSmith, PromptLayer)

- Every prompt change goes through a review and an evaluation suite

- The deployment of a new prompt follows a CI/CD pipeline with automated tests

- The prompt version is logged with every production call

A prompt modified without these guardrails can silently degrade response quality for days before anyone notices.
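One possible way to log the prompt version with every call is to derive the version from the prompt content itself. The sketch below assumes a hypothetical `PromptTemplate` class and log format. Dedicated tools such as LangSmith or PromptLayer have their own mechanisms, which are not shown here.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    text: str

    @property
    def version(self) -> str:
        # Content hash: any edit to the prompt text yields a new version identifier.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]


def call_log_record(prompt: PromptTemplate, model: str, user_input: str) -> str:
    """Build the JSON log line emitted alongside each production call."""
    return json.dumps({
        "prompt_name": prompt.name,
        "prompt_version": prompt.version,
        "model": model,
        "input_chars": len(user_input),
    }, sort_keys=True)


v1 = PromptTemplate("summarise", "Summarise the text in three sentences.")
v2 = PromptTemplate("summarise", "Summarise the text in two sentences.")
print(call_log_record(v1, "gpt-4-0613", "some user text"))
```

With a content hash, a prompt edited outside the review process still shows up in the logs as a version nobody recognises.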

Systematic evaluation (evals)

Evals are the LLM equivalent of automated tests. They are sets of test cases with evaluation criteria, run before each deployment and regularly in production.

Three families coexist:

- Criterion-based evals: the expected result is exactly measurable. If the model must extract a date from a text, the extracted date is either correct or it is not.

- Model-as-judge evals: a second LLM (often more powerful) evaluates response quality according to defined criteria (relevance, accuracy, tone, length). This is more flexible, but the judge introduces its own variance.

- Human evals: a panel of evaluators scores responses according to a rubric. This is expensive and slow, but it is the only way to evaluate subjective dimensions.

What to measure:

- Rate of responses in the expected format (valid JSON, length respected)

- Rate of inappropriate refusals (the model refuses to answer when it should have answered)

- Rate of detectable hallucinations (verifiable factual claims)

- Consistency across calls (same question, coherent response)

- Drift from a reference baseline
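Two of these measurements, format validity and refusals, can be sketched with the standard library alone. The refusal markers below are illustrative assumptions, not an exhaustive list, and a real system would likely use a classifier or a judge model instead.

```python
import json
from typing import Callable

# Illustrative refusal phrases (assumption); real refusals are more varied.
REFUSAL_MARKERS = ("i cannot", "i can't", "i am unable")


def is_valid_json(response: str) -> bool:
    try:
        json.loads(response)
        return True
    except json.JSONDecodeError:
        return False


def looks_like_refusal(response: str) -> bool:
    lowered = response.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def rate(responses: list[str], predicate: Callable[[str], bool]) -> float:
    """Fraction of responses for which the predicate holds."""
    return sum(predicate(r) for r in responses) / len(responses)


responses = [
    '{"date": "2024-08-01"}',
    "I cannot help with that.",
    '{"date": ',                 # truncated output, invalid JSON
    '{"date": "2023-01-15"}',
]
format_rate = rate(responses, is_valid_json)
refusal_rate = rate(responses, looks_like_refusal)
print(f"valid JSON: {format_rate:.0%}, refusals: {refusal_rate:.0%}")
```

Tracking these rates against a stored baseline turns "drift from a reference baseline" into a simple comparison of two numbers per metric.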

Observability in production

Monitoring an LLM in production goes well beyond latency and HTTP error rate.

Technical metrics:

- Time-to-first-token (TTFT) latency and total latency

- Number of tokens consumed (input + output), directly correlated with cost

- API error rate (timeout, rate limiting, context length exceeded)

- Cost per call, cost per user session, total cost
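Cost per call and per session follow directly from token counts. The sketch below uses placeholder prices, which are assumptions and not any provider's actual tariff. The token counts would normally come from the usage data returned with each API response.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float    # price per 1M input tokens (placeholder value)
    output_per_million: float   # price per 1M output tokens (placeholder value)


def call_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost of one call, in the same currency unit as the pricing."""
    return (input_tokens * pricing.input_per_million
            + output_tokens * pricing.output_per_million) / 1_000_000


PLACEHOLDER_PRICING = Pricing(input_per_million=2.0, output_per_million=8.0)

# (input_tokens, output_tokens) for each call of one user session.
session_calls = [(1200, 300), (1500, 450), (900, 200)]
session_cost = sum(call_cost(i, o, PLACEHOLDER_PRICING) for i, o in session_calls)
print(f"session cost: {session_cost:.4f}")
```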

Quality metrics:

- Distribution of automatic evaluation scores on production calls

- Negative user feedback rate (if a feedback mechanism exists)

- Response length anomalies (abnormally short or long responses)

- Detection of off-topic response patterns

LLM calls are embedded in larger processing chains (RAG, agents, multi-step). Distributed tracing (LangSmith, Arize Phoenix, or OpenTelemetry with custom spans) allows you to reconstruct the full context of a problematic call.
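To illustrate the idea only, here is a standard-library sketch in which each step of a chain records a span under a shared trace identifier. The `span` helper, the in-memory `SPANS` sink and the attribute names are hypothetical. In a real system OpenTelemetry or one of the tools above would play this role.

```python
import time
import uuid
from contextlib import contextmanager

SPANS: list[dict] = []  # in-memory sink, standing in for a tracing backend


@contextmanager
def span(trace_id: str, name: str, **attributes):
    """Record the duration and attributes of one step of the chain."""
    start = time.perf_counter()
    try:
        yield
    finally:
        SPANS.append({
            "trace_id": trace_id,
            "name": name,
            "duration_s": time.perf_counter() - start,
            **attributes,
        })


def answer(question: str) -> str:
    trace_id = uuid.uuid4().hex  # one identifier shared by every step of this request
    with span(trace_id, "retrieve", query=question):
        documents = ["doc-1", "doc-2"]   # placeholder for the retrieval step
    with span(trace_id, "generate", prompt_version="v3", n_documents=len(documents)):
        response = "placeholder answer"  # placeholder for the model call
    return response


answer("What is our refund policy?")
for recorded in SPANS:
    print(recorded["name"], recorded["trace_id"])
```

Filtering the spans by `trace_id` gives back the retrieval query, the prompt version and the timings that led to one specific response.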

Managing drift

Drift in LLMOps has three sources.

Model drift. The provider updates the underlying model. Even if the model alias stays the same (gpt-4), the behaviour may change. The only protection is to pin the exact model version (gpt-4-0613 rather than gpt-4) and to run an eval suite that detects regressions.
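Pinning can be enforced with a small configuration check run in CI. The sketch below assumes that a pinned identifier ends with a numeric or dated snapshot suffix, as in gpt-4-0613. That naming convention is an assumption and should be adapted to each provider's actual scheme.

```python
import re

# Assumption: pinned identifiers end with a snapshot suffix such as
# "-0613", "-20240307" or "-2024-08-06"; floating aliases do not.
_SNAPSHOT_SUFFIX = re.compile(r"-(\d{4}|\d{8}|\d{4}-\d{2}-\d{2})$")


def is_pinned(model: str) -> bool:
    return bool(_SNAPSHOT_SUFFIX.search(model))


def unpinned_models(config: dict[str, str]) -> list[str]:
    """Return the configuration keys whose model identifier is a floating alias."""
    return [key for key, model in config.items() if not is_pinned(model)]


# Hypothetical application configuration.
config = {"summariser": "gpt-4-0613", "classifier": "gpt-4"}
print(unpinned_models(config))
```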

Data drift. The distribution of user inputs changes over time. A model trained or fine-tuned on January data may perform poorly on July patterns. Monitoring the distribution of inputs (embedding drift) allows you to detect these shifts.
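One simple way to quantify embedding drift, among several, is the cosine distance between the centroid of a baseline window of input embeddings and the centroid of a recent window. The two-dimensional vectors and the alert threshold below are toy assumptions. Real embeddings have hundreds of dimensions and come from an embedding model.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    """Component-wise mean of a set of embedding vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_distance(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


# Toy embeddings: the January inputs and the July inputs point in different directions.
january = [[1.0, 0.0], [0.9, 0.1]]
july = [[0.1, 0.9], [0.0, 1.0]]

DRIFT_THRESHOLD = 0.2  # arbitrary value for the example, to be calibrated on real data
drift = cosine_distance(centroid(january), centroid(july))
if drift > DRIFT_THRESHOLD:
    print(f"input drift detected: {drift:.2f}")
```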

Prompt drift. The accumulation of prompt modifications can create undetected inconsistencies if each modification is not tested in the global context. Cumulative drift is more dangerous than any individual modification taken in isolation.

Cost management

The cost of an LLM in production is proportional to the number of tokens processed. A poorly designed system can generate costs ten times higher than initial estimates.

Main levers:

Caching. Responses to identical or very similar queries can be cached. LLM providers also often offer prompt caching: a repeated prompt prefix, typically the system prompt, is billed at a reduced rate on subsequent calls instead of at full price each time.
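A minimal sketch of an application-side response cache for identical queries. The `ResponseCache` class is hypothetical. It matches queries exactly after whitespace and case normalisation, which is itself an assumption that may not suit case-sensitive prompts. Matching "very similar" queries would require a semantic cache based on embeddings, which is not shown.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Exact-match cache keyed on the model and the normalised prompt."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        normalised = " ".join(prompt.split()).lower()
        return hashlib.sha256(f"{model}\n{normalised}".encode("utf-8")).hexdigest()

    def get_or_generate(self, model: str, prompt: str,
                        generate: Callable[[str], str]) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = generate(prompt)  # only cache misses reach the model
        self._store[key] = response
        return response


cache = ResponseCache()
cache.get_or_generate("some-model", "What is LLMOps?", lambda p: "placeholder answer")
cache.get_or_generate("some-model", "  what is   LLMOps? ", lambda p: "placeholder answer")
print(cache.hits, cache.misses)
```

Including the model identifier in the key avoids serving a response generated by one model version to a request routed to another.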

Intelligent routing. Not all requests require the most powerful model. A router that directs simple requests to a less expensive model (GPT-4o mini, Haiku) and complex requests to the premium model can divide costs by 5 to 10.
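As a rough illustration, a router can start as a simple heuristic before being replaced by a trained classifier. The keywords, the word limit and the model names below are all assumptions made for the example.

```python
# Illustrative hints that a request needs multi-step reasoning (assumption).
COMPLEX_HINTS = ("analyse", "compare", "explain why", "step by step")


def choose_model(request: str,
                 cheap_model: str = "cheap-model",
                 premium_model: str = "premium-model",
                 max_cheap_words: int = 40) -> str:
    """Send long or reasoning-heavy requests to the premium model, the rest to the cheap one."""
    lowered = request.lower()
    is_long = len(request.split()) > max_cheap_words
    needs_reasoning = any(hint in lowered for hint in COMPLEX_HINTS)
    return premium_model if (is_long or needs_reasoning) else cheap_model


print(choose_model("What are your opening hours?"))
print(choose_model("Compare these two contracts and explain why clause 4 differs."))
```

Whatever the routing rule, the eval suite should run per route, since a request misrouted to the cheaper model is a quality regression that cost metrics alone will not reveal.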

Prompt optimisation. Verbose prompts cost more. A regular audit of prompts to remove redundant instructions reduces consumption without touching quality.

Batch processing. For non-real-time use cases (report generation, data enrichment), the batch API is significantly cheaper (50% reduction at OpenAI).

The deployment pipeline

The disciplines described above crystallise into a pipeline that every modification traverses: a new prompt, a model version change, a redesign of the RAG chain. The pipeline itself is not specific to LLMOps. What is specific is what gets checked at each stage.

Prompt and configuration. Starting point: a change versioned in the repository, peer-reviewed and committed like any application code modification. No direct prompt modification in production, no untracked hot edits.

Automated evals. The change is run against a reference dataset. If the score stays below the defined threshold, iteration resumes: this is the fastest feedback loop of the system, and the most useful. Until evals pass, nothing moves further down the pipeline.
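A sketch of such a gate, using a criterion-based eval on date extraction. The reference dataset, the threshold and `candidate_extract_date` are hypothetical. The latter is a regex stand-in for the prompt and model under test, so that the example runs offline.

```python
import re
from typing import Callable, Optional

# Hypothetical reference dataset: (input text, expected extracted date).
REFERENCE_DATASET = [
    ("Invoice issued on 2024-03-15.", "2024-03-15"),
    ("Meeting moved to 2023-11-02 at noon.", "2023-11-02"),
    ("No date is mentioned here.", None),
]


def candidate_extract_date(text: str) -> Optional[str]:
    # Stand-in for the changed prompt plus the model call.
    match = re.search(r"\d{4}-\d{2}-\d{2}", text)
    return match.group(0) if match else None


def eval_score(system: Callable[[str], Optional[str]], dataset) -> float:
    """Fraction of reference cases for which the output matches exactly."""
    return sum(system(text) == expected for text, expected in dataset) / len(dataset)


def gate(score: float, threshold: float) -> bool:
    """True if the change may move further down the pipeline."""
    return score >= threshold


score = eval_score(candidate_extract_date, REFERENCE_DATASET)
print("proceed to shadow mode" if gate(score, threshold=0.9) else "iterate on the change")
```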

Shadow mode. The new prompt processes real requests in parallel to the existing one. Its responses are not served to users, but compared against the current behaviour on production cases. This mode detects silent regressions that automated evals do not cover: new input types, business edge cases, interactions with other components of the system. It is the last barrier before user exposure.
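The principle of shadow mode fits in a few lines. In this sketch `handle_request` and the log format are hypothetical, and the candidate is called synchronously for simplicity. A production implementation would typically run it asynchronously so that it adds no latency for the user.

```python
from typing import Callable


def handle_request(user_input: str,
                   current: Callable[[str], str],
                   candidate: Callable[[str], str],
                   comparison_log: list[dict]) -> str:
    """Serve the current prompt's response; record the candidate's response for comparison only."""
    served = current(user_input)
    try:
        shadow = candidate(user_input)
        comparison_log.append({
            "input": user_input,
            "served": served,
            "shadow": shadow,
            "match": served == shadow,
        })
    except Exception as exc:
        # A failing candidate must never affect what the user receives.
        comparison_log.append({"input": user_input, "served": served,
                               "shadow_error": repr(exc)})
    return served


log: list[dict] = []
result = handle_request("Summarise this ticket.",
                        current=lambda s: "current summary",
                        candidate=lambda s: "candidate summary",
                        comparison_log=log)
print(result, log[0]["match"])
```

Exact string equality is rarely the right comparison for free-text responses. The logged pairs are usually scored by the same evals as the reference dataset.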

Canary deployment. A fraction of real traffic is routed to the new prompt: 5%, then 25%, then 100%. Each stage stays under monitoring for 24 to 48 hours before moving up. The metrics observed are both technical (API error rate, latency, cost per call) and qualitative (negative feedback rate, drift in production eval scores). A regression at any stage triggers a configuration rollback, which must be instantaneous.
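One common way to split traffic, shown here as a sketch, is to hash a stable user identifier into a bucket from 0 to 99 and compare it with the current canary percentage. The function names are hypothetical. Setting the percentage back to 0 is then the configuration rollback.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket in [0, 100), independent of process or restart."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def prompt_variant(user_id: str, canary_percent: int) -> str:
    """Return which prompt serves this user at the given rollout stage."""
    return "candidate" if bucket(user_id) < canary_percent else "current"


users = [f"user-{i}" for i in range(1000)]
for stage in (5, 25, 100):
    share = sum(prompt_variant(u, stage) == "candidate" for u in users) / len(users)
    print(f"stage {stage}%: {share:.1%} of users on the candidate prompt")
```

Because the bucket depends only on the user identifier, a user exposed to the new prompt at 5% stays on it at 25%, which keeps their experience consistent across stages.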

Production and continuous monitoring. The new prompt becomes the reference state. Continuous supervision feeds the next iteration: what happens in production produces the cases that will enrich the eval dataset, and the patterns observed become the hypotheses for the next change.

This pipeline is a loop, not a line. Each release strengthens the evaluation and detection capabilities for the next. Without this loop, the disciplines described above become well-documented good intentions.

What the AI Act changes for LLMOps

For high-risk AI systems (decision-making systems in regulated domains such as finance, health, employment, justice), the AI Act imposes requirements that translate directly into LLMOps practices:

- Logging: retention of usage logs enabling a posteriori auditability

- Human oversight: defined human control points for high-impact decisions

- Accuracy and robustness: documented and maintained evaluation metrics

- Transparency: documentation of the system’s capabilities and limitations

These requirements are not incompatible with efficient operation, provided they are integrated from the design stage, not as an afterthought.

Conclusion

Putting an LLM in production without LLMOps practices is like deploying an application without monitoring or tests. It may work for a while, but problems arrive at the worst moment.

LLMOps practices are not inventions. They draw on what the DevOps world has developed over twenty years, adapted to the probabilistic specificities of language models. Teams with a solid MLOps culture already have half the journey done.

The other half is understanding what is fundamentally new: probabilistic evaluation, model drift management, and traceability in multi-step agentic systems.

General framework: Controlled AI, our doctrine for engineering AI systems in real-world environments.

Industrialising an AI use case in a critical business context, instrumenting evaluation, traceability and model drift: this is what we do alongside technical teams. Software development and managed services for trust-critical systems.